Software architecture is the backbone of any robust application. When teams invest time in Object-Oriented Analysis and Design (OOAD), the goal is to create systems that are maintainable, scalable, and resilient. However, a design document or a set of class diagrams is only as good as the scrutiny it withstands. A design review is not merely a formality; it is a critical checkpoint to identify flaws before implementation begins. This guide provides a comprehensive, practical checklist for conducting effective object-oriented design reviews.

By adhering to structured evaluation criteria, teams can reduce technical debt, improve code quality, and ensure that the system aligns with business requirements. The following sections detail the essential areas to examine, supported by specific questions and criteria to guide your review process.

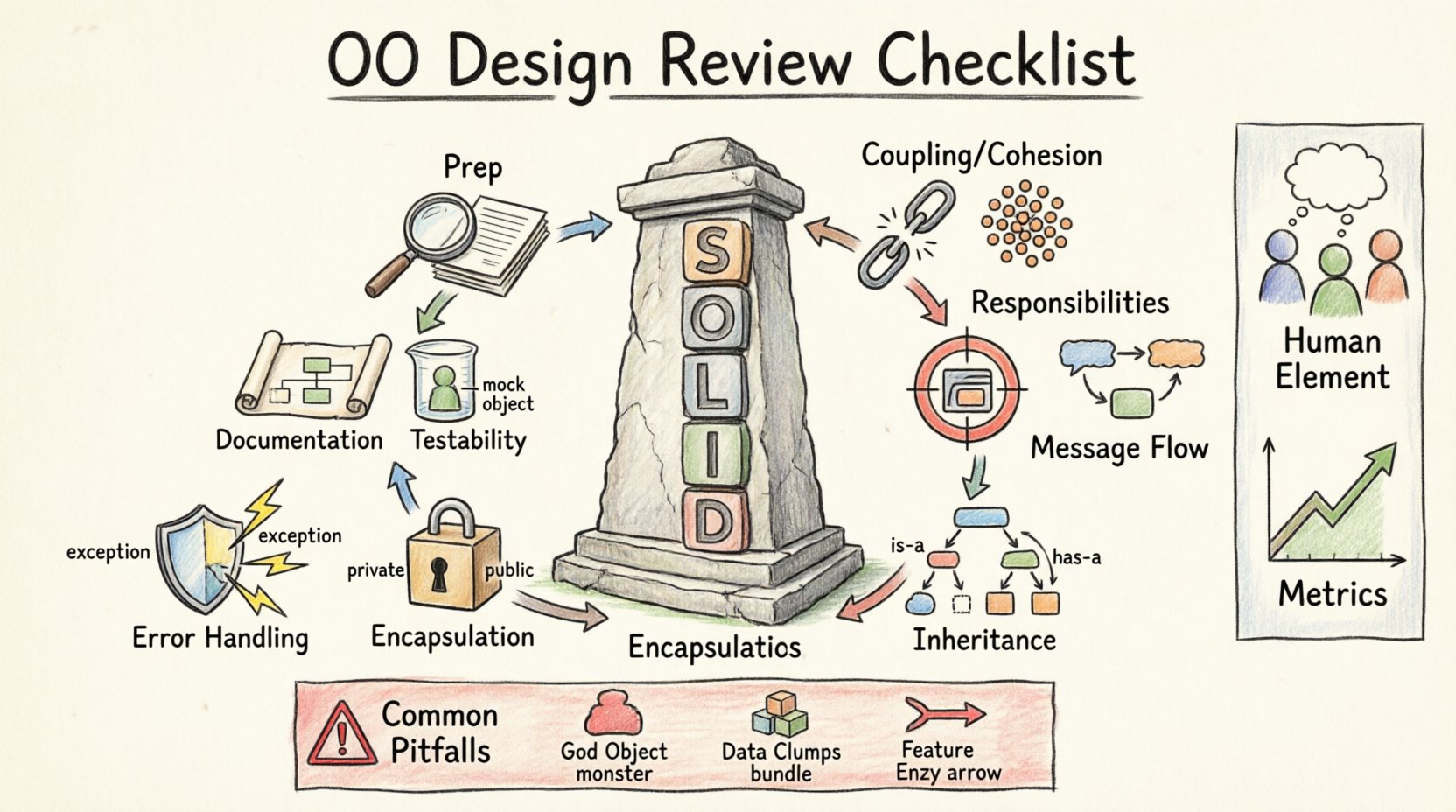

1. Pre-Review Preparation 📋

Before diving into the technical specifics, ensure the review environment is set for success. A chaotic review leads to missed details. Preparation determines the efficiency of the session.

- Define the Scope: Clearly outline which components are under review. Is this a high-level architecture review or a deep dive into specific class implementations?

- Gather Materials: Ensure all UML diagrams, sequence charts, and requirement specifications are accessible to reviewers.

- Set Expectations: Establish the goals of the review. Are we looking for performance bottlenecks, security vulnerabilities, or maintainability issues?

- Assign Roles: Designate a moderator to keep the discussion focused and a scribe to record decisions and action items.

2. Adherence to SOLID Principles ✅

The SOLID principles form the foundation of object-oriented design. During a review, examine the design against these five core tenets to ensure long-term stability.

Single Responsibility Principle (SRP)

Every class should have one, and only one, reason to change. Reviewers should look for classes that seem to do too much.

- Check if a class handles both data storage and business logic.

- Identify classes that manage multiple distinct concerns, such as logging and validation.

- Ensure that a change to a single requirement touches as few classes as possible, ideally one.
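
The first check above (storage mixed with business logic) can be illustrated with a minimal sketch. The names `Invoice`, `InvoiceCalculator`, and `InMemoryInvoiceRepository` are hypothetical and chosen only for illustration; the point is that pricing rules and persistence each live in a class with its own reason to change.

```python
# Hypothetical names, for illustration only.
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Invoice:
    """Plain data: holds invoice fields and nothing else."""
    invoice_id: str
    net_amount: float


class InvoiceCalculator:
    """Business logic only: changes when pricing or tax rules change."""

    def __init__(self, tax_rate: float) -> None:
        self._tax_rate = tax_rate

    def total(self, invoice: Invoice) -> float:
        return round(invoice.net_amount * (1 + self._tax_rate), 2)


class InMemoryInvoiceRepository:
    """Storage only: changes when the persistence mechanism changes."""

    def __init__(self) -> None:
        self._rows: dict[str, Invoice] = {}

    def save(self, invoice: Invoice) -> None:
        self._rows[invoice.invoice_id] = invoice

    def find(self, invoice_id: str) -> Optional[Invoice]:
        return self._rows.get(invoice_id)
```

In a review, a single class containing both `total` and `save` would be a candidate for this kind of split.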

Open/Closed Principle (OCP)

Software entities should be open for extension but closed for modification. This reduces the risk of introducing bugs when adding new features.

- Look for extensive use of `if-else` or `switch` statements that depend on object types.

- Verify that new functionality is added via new classes or interfaces rather than altering existing code.

- Ensure that existing behavior is not broken by new additions.
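
One way to picture the extension point reviewers are looking for is sketched below. The shipping rules and the `quote` function are hypothetical examples, not taken from any specific system; the sketch assumes that new behaviour arrives as a new class while the calling code stays unchanged.

```python
# Hypothetical names, for illustration only.
from typing import Protocol


class ShippingRule(Protocol):
    def cost(self, weight_kg: float) -> float: ...


class StandardShipping:
    def cost(self, weight_kg: float) -> float:
        return 5.0 + 1.0 * weight_kg


class ExpressShipping:
    def cost(self, weight_kg: float) -> float:
        return 12.0 + 1.5 * weight_kg


def quote(rule: ShippingRule, weight_kg: float) -> float:
    """Closed for modification: no type checks, works with any rule."""
    return rule.cost(weight_kg)


# Extension: a new rule is a new class; quote() is not edited.
class FreeShipping:
    def cost(self, weight_kg: float) -> float:
        return 0.0
```

If `quote` instead branched on the rule's type, every new shipping option would require editing it, which is the pattern the first bullet asks reviewers to flag.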

Liskov Substitution Principle (LSP)

Objects of a superclass should be replaceable with objects of its subclasses without breaking the application.

- Check if subclasses adhere to the contract of the parent class.

- Look for overridden methods that throw unexpected exceptions.

- Ensure that preconditions are not strengthened and postconditions are not weakened in derived classes.
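
The precondition check can be made concrete with a small contract test. The sketch below uses hypothetical `Account` classes; `honours_base_contract` is an illustrative helper written only against the base class contract, and it assumes the account under test holds at least 80 units.

```python
# Hypothetical names, for illustration only.
class Account:
    """Contract: withdraw(amount) accepts any 0 < amount <= balance."""

    def __init__(self, balance: int) -> None:
        self.balance = balance

    def withdraw(self, amount: int) -> int:
        if not 0 < amount <= self.balance:
            raise ValueError("amount must be positive and covered by the balance")
        self.balance -= amount
        return self.balance


class AuditedAccount(Account):
    """Substitutable: same precondition and postcondition, extra bookkeeping."""

    def __init__(self, balance: int) -> None:
        super().__init__(balance)
        self.log: list[int] = []

    def withdraw(self, amount: int) -> int:
        remaining = super().withdraw(amount)
        self.log.append(amount)
        return remaining


class CappedAccount(Account):
    """Violation: strengthens the precondition with a cap callers never agreed to."""

    def withdraw(self, amount: int) -> int:
        if amount > 50:
            raise ValueError("cap exceeded")  # unexpected for Account clients
        return super().withdraw(amount)


def honours_base_contract(account: Account) -> bool:
    """Contract test against Account; assumes a balance of at least 80."""
    before = account.balance
    try:
        remaining = account.withdraw(80)
    except ValueError:
        return False
    return remaining == before - 80
```

Running the same contract test against every subclass is one inexpensive way to surface substitution problems during or after a review.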

Interface Segregation Principle (ISP)

Clients should not be forced to depend on interfaces they do not use. Avoid large, monolithic interfaces.

- Review if interfaces contain methods that are irrelevant to certain implementers.

- Ensure that clients only know about the methods they actually invoke.

- Break down large interfaces into smaller, role-specific ones.
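
A minimal sketch of role-specific interfaces follows. The `Printer` and `Scanner` roles and the device classes are hypothetical; the sketch assumes structural typing via `typing.Protocol`, so a class implements a role simply by providing its methods.

```python
# Hypothetical names, for illustration only.
from typing import Protocol


class Printer(Protocol):
    def print_document(self, text: str) -> str: ...


class Scanner(Protocol):
    def scan(self) -> str: ...


class BasicPrinter:
    """Implements only the role it supports; no stubbed-out scan()."""

    def print_document(self, text: str) -> str:
        return f"printed: {text}"


class MultiFunctionDevice:
    """Supports both roles by providing both methods."""

    def print_document(self, text: str) -> str:
        return f"printed: {text}"

    def scan(self) -> str:
        return "scanned page"


def send_report(printer: Printer, text: str) -> str:
    """This client depends on the Printer role only."""
    return printer.print_document(text)
```

With a single combined interface, `BasicPrinter` would need a `scan` method that raises or does nothing, which is the symptom the first bullet describes.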

Dependency Inversion Principle (DIP)

High-level modules should not depend on low-level modules. Both should depend on abstractions.

- Check for tight coupling between high-level business logic and low-level database or UI code.

- Verify that dependencies are injected rather than instantiated directly within the class.

- Ensure that the design relies on interfaces or abstract classes for dependencies.
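
The injection check might look like this in practice. `OrderService`, `OrderStore`, and `InMemoryOrderStore` are hypothetical names; the sketch assumes constructor injection, which is only one of several ways to supply a dependency.

```python
# Hypothetical names, for illustration only.
from typing import Protocol


class OrderStore(Protocol):
    def save(self, order_id: str, total: float) -> None: ...


class OrderService:
    """High-level policy; depends only on the OrderStore abstraction."""

    def __init__(self, store: OrderStore) -> None:
        self._store = store  # injected, not instantiated here

    def place(self, order_id: str, total: float) -> str:
        if total <= 0:
            raise ValueError("total must be positive")
        self._store.save(order_id, total)
        return f"order {order_id} placed"


class InMemoryOrderStore:
    """Low-level detail; a database-backed store could replace it."""

    def __init__(self) -> None:
        self.saved: dict[str, float] = {}

    def save(self, order_id: str, total: float) -> None:
        self.saved[order_id] = total
```

A reviewer would flag a version of `OrderService` that constructs a concrete database client inside `__init__`, since that ties the business rule to one storage technology.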

3. Coupling and Cohesion 🔗

Two critical metrics for design health are coupling and cohesion. High cohesion and low coupling lead to modular, flexible systems.

Evaluating Coupling

Coupling refers to the degree of interdependence between software modules. You want loose coupling.

- Direct Instantiation: Avoid creating concrete instances of dependencies directly within a class.

- Data Dependencies: Check if objects are passing large data structures that contain information only some methods need.

- Global State: Minimize reliance on global variables or singletons that create hidden dependencies.
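
The data-dependency bullet is easy to miss on a diagram, so a small contrast may help. The `Customer` type and both functions below are hypothetical; they compute the same result, but the second states exactly what it needs.

```python
# Hypothetical names, for illustration only.
from dataclasses import dataclass


@dataclass
class Customer:
    name: str
    email: str
    postcode: str
    credit_limit: float


# Tighter coupling: requires a whole Customer although it reads one field.
def delivery_zone_from_customer(customer: Customer) -> str:
    return customer.postcode[:2].upper()


# Looser coupling: the signature states exactly what is required.
def delivery_zone(postcode: str) -> str:
    return postcode[:2].upper()
```

The narrower signature can be called from code that has no `Customer` at all, and it is unaffected when unrelated fields of `Customer` change.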

Evaluating Cohesion

Cohesion measures how closely related the responsibilities of a class are. You want high cohesion.

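- Logical Cohesion: Be wary of classes that group operations only because they fall into the same broad category (for example, a catch-all utility class) even though they serve unrelated purposes.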

- Temporal Cohesion: Be wary of classes that group operations simply because they happen at the same time.

- Functional Cohesion: Aim for this level, where every part of the class is necessary for the class’s primary function.
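
For teams that want a rough number to discuss, the sketch below counts pairs of methods that share no fields. This is a deliberately simplified heuristic, loosely in the spirit of lack-of-cohesion metrics, and not a standard formula; the function name and the field-usage data are hypothetical, as if read off a class diagram by hand.

```python
# Hypothetical names and data, for illustration only.
from itertools import combinations


def disjoint_method_pairs(usage: dict[str, set[str]]) -> int:
    """Count method pairs that share no fields; higher suggests lower cohesion."""
    return sum(1 for a, b in combinations(usage.values(), 2) if not a & b)


# Method name -> fields that method reads or writes.
cart = {
    "add_item": {"items"},
    "remove_item": {"items"},
    "total": {"items", "discount"},
}
grab_bag = {
    "format_date": {"locale"},
    "send_email": {"smtp_host"},
    "hash_password": {"salt"},
}
```

Here `disjoint_method_pairs(cart)` is 0 and `disjoint_method_pairs(grab_bag)` is 3, which matches the intuition that the second class bundles unrelated concerns. The count is a conversation starter rather than a pass/fail threshold.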

4. Class Responsibilities & Single Responsibility 🎯

Assigning responsibilities clearly is vital. If a class does not know its job, it will fail when the requirements shift.

- Public Interface: Is the public interface minimal? Does it expose too much internal state?

- Method Granularity: Are methods too large? A method doing too much often indicates a class that is doing too much.

- State Management: Does the class manage its own state correctly, or does it rely on external objects to track its status?

5. Interaction and Message Flow 🔄

Objects communicate via messages. Understanding the flow of data and control is essential for performance and correctness.

- Sequence Diagrams: Review these to ensure the flow makes sense logically.

- Circular Dependencies: Ensure Class A does not depend on Class B, which depends back on Class A.

- Feedback Loops: Check for infinite loops or recursive calls that lack proper termination conditions.

- Interface Contracts: Verify that the sender of a message understands the receiver’s capabilities.
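
Circular dependencies can be checked mechanically once the class dependencies are written down. The sketch below is a plain depth-first search over a hypothetical dependency map (class name to the classes it depends on); `find_cycle` is an illustrative helper, not part of any tool.

```python
# Hypothetical names and data, for illustration only.
from typing import Optional


def find_cycle(deps: dict[str, list[str]]) -> Optional[list[str]]:
    """Return one dependency cycle as a list of class names, or None."""
    visiting: list[str] = []  # current depth-first path
    done: set[str] = set()    # nodes fully explored without finding a cycle

    def visit(node: str) -> Optional[list[str]]:
        if node in done:
            return None
        if node in visiting:
            # Back edge: slice the path from the first occurrence of node.
            return visiting[visiting.index(node):] + [node]
        visiting.append(node)
        for target in deps.get(node, []):
            cycle = visit(target)
            if cycle:
                return cycle
        visiting.pop()
        done.add(node)
        return None

    for start in deps:
        cycle = visit(start)
        if cycle:
            return cycle
    return None
```

For example, a map where `Order` depends on `Customer`, `Customer` on `Invoice`, and `Invoice` back on `Order` yields `["Order", "Customer", "Invoice", "Order"]`, while an acyclic map yields `None`.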

6. Inheritance and Polymorphism 🧬

Inheritance is a powerful tool but should be used judiciously. Improper inheritance hierarchies can make refactoring difficult.

- Depth of Hierarchy: Avoid deep inheritance trees. As a rule of thumb, hierarchies deeper than about three levels deserve extra scrutiny.

- Is-a vs Has-a: Ensure inheritance represents an `is-a` relationship. Use composition for `has-a` relationships.

- Polymorphic Behavior: Ensure that polymorphism is used to handle different behaviors, not just to organize code.

- Fragile Base Class: Check if changes to a base class could break multiple subclasses unexpectedly.
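
The is-a versus has-a question can be illustrated with a familiar case. Both classes below are hypothetical: `Stack` holds a list internally, while `LeakyStack` inherits from `list` and therefore exposes every list operation to its clients.

```python
# Hypothetical names, for illustration only.
class Stack:
    """A stack has-a list; it is not a list, so it exposes only stack operations."""

    def __init__(self) -> None:
        self._items: list[int] = []

    def push(self, value: int) -> None:
        self._items.append(value)

    def pop(self) -> int:
        return self._items.pop()

    def __len__(self) -> int:
        return len(self._items)


class LeakyStack(list):
    """Inheriting from list leaks insert(), sort(), and more to every client."""

    def push(self, value: int) -> None:
        self.append(value)
```

Clients of `LeakyStack` can call `insert(0, x)` and break the last-in, first-out guarantee, whereas `Stack` can swap its internal container later without affecting callers.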

7. Encapsulation and Visibility 🔒

Encapsulation hides internal implementation details. This protects the integrity of the data.

- Access Modifiers: Are fields private? Are getters and setters necessary, or should the data be immutable?

- Internal State: Can external code modify the internal state of an object without going through the class methods?

- Public Methods: Do public methods expose internal implementation details that should remain hidden?
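
The internal-state question often comes down to whether collections are shared by reference. The `Playlist` class below is a hypothetical sketch that copies its input and hands out an immutable snapshot, so all changes go through `add`. Python has no enforced private fields, so the leading underscore is a convention here rather than a guarantee.

```python
# Hypothetical names, for illustration only.
class Playlist:
    def __init__(self, tracks: list[str]) -> None:
        self._tracks = list(tracks)  # defensive copy of the caller's list

    @property
    def tracks(self) -> tuple[str, ...]:
        return tuple(self._tracks)  # immutable snapshot, not the internal list

    def add(self, track: str) -> None:
        if not track:
            raise ValueError("track name must not be empty")
        self._tracks.append(track)
```

Without the copy in `__init__`, a caller that keeps a reference to the original list could change the playlist's contents and bypass the validation in `add`.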

8. Error Handling and State Management ⚠️

Robust systems handle failures gracefully. A design review must scrutinize how errors are managed.

- Exception Propagation: Are exceptions caught and handled, or are they swallowed silently?

- State Consistency: If an operation fails mid-way, does the object remain in a valid state?

- Recovery Strategies: Is there a mechanism to recover from transient failures?

- Logging: Is there adequate logging for debugging without exposing sensitive data?
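
The state-consistency question can be answered in a design by working on a copy and committing only on success. The `Inventory` class below is a hypothetical sketch of that approach; it is one option among several (explicit rollback or transactions are others).

```python
# Hypothetical names, for illustration only.
class Inventory:
    def __init__(self, stock: dict[str, int]) -> None:
        self._stock = dict(stock)

    def reserve_all(self, wanted: dict[str, int]) -> None:
        """Reserve every item or none: work on a copy, commit only on success."""
        draft = dict(self._stock)
        for sku, qty in wanted.items():
            if draft.get(sku, 0) < qty:
                raise ValueError(f"insufficient stock for {sku}")
            draft[sku] -= qty
        self._stock = draft  # single assignment is the commit point

    def available(self, sku: str) -> int:
        return self._stock.get(sku, 0)
```

If the loop mutated `self._stock` directly, a failure on the second item would leave the first item reserved, which is the half-applied state the bullet warns about.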

9. Testability Considerations 🧪

If a design is hard to test, it is likely hard to maintain. Testability should be a primary criterion.

- Mocking: Can dependencies be easily mocked for unit testing?

- Isolation: Can a class be tested in isolation from the database or network?

- Side Effects: Do methods produce side effects that make testing difficult?

- Setup Complexity: Does creating an instance of the class require extensive setup code?
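
Hidden side effects such as reading the system clock are a common reason a class is hard to test. The sketch below uses a hypothetical `TrialPolicy` that receives its clock as a callable, so a test can supply a fixed time instead of patching globals.

```python
# Hypothetical names, for illustration only.
from datetime import datetime, timezone
from typing import Callable


class TrialPolicy:
    def __init__(self, now: Callable[[], datetime]) -> None:
        self._now = now  # injected clock instead of calling datetime.now() inside

    def is_expired(self, started: datetime, days: int = 14) -> bool:
        return (self._now() - started).days >= days


# In a test, the clock is a lambda returning a fixed instant.
fixed = datetime(2024, 1, 31, tzinfo=timezone.utc)
policy = TrialPolicy(now=lambda: fixed)
```

Production code would pass something like `lambda: datetime.now(timezone.utc)`; the class itself needs no setup beyond that one argument.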

10. Documentation Clarity 📝

Documentation bridges the gap between design and implementation. It must be clear and concise.

- Javadoc/Comments: Are public methods documented with clear explanations of purpose, parameters, and return values?

- Design Rationale: Is there documentation explaining why certain design decisions were made?

- Consistency: Is the terminology consistent across diagrams and code comments?

- Diagrams: Are the diagrams up to date with the actual design?

Master Checklist Table 📊

Use this table as a quick reference during the review session. Mark items as Pass, Fail, or Needs Revision.

| Category | Checklist Item | Pass/Fail | Notes |
|---|---|---|---|
| SRP | Does each class have only one reason to change? | | |
| OCP | Is the code open for extension without modification? | | |
| Coupling | Are dependencies minimized and injected? | | |
| Cohesion | Are class responsibilities tightly related? | | |
| Encapsulation | Is internal state protected from external modification? | | |
| Testability | Can the class be unit tested in isolation? | | |
| Interface | Are interfaces minimal and client-specific? | | |
| Documentation | Are diagrams and comments up to date? | | |
| Error Handling | Are failure scenarios handled gracefully? | | |
| Inheritance | Is inheritance used only for is-a relationships? | | |

Common Pitfalls to Avoid 🚫

Even with a checklist, certain patterns frequently slip through. Be vigilant against these common issues.

- God Objects: Classes that know everything and do everything. These become bottlenecks for change.

- Data Clumps: Groups of data that always appear together but are scattered across different objects. Consider bundling them into a value object.

- Feature Envy: A method that relies more on another class's methods and data than on those of its own class. Consider moving the method to the class it uses most.

- Primitive Obsession: Using primitive types (like strings or integers) for complex concepts. Create value objects instead.

- Switch Statements: Using `switch` statements to handle types. Use polymorphism to replace these.
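
The value-object remedy suggested for Data Clumps and Primitive Obsession might look like the sketch below. `Money` is a hypothetical example; it assumes amounts are stored as integer cents and currencies as three-letter codes.

```python
# Hypothetical names, for illustration only.
from dataclasses import dataclass


@dataclass(frozen=True)
class Money:
    """Bundles amount and currency, which otherwise travel as loose primitives."""
    amount_cents: int
    currency: str

    def __post_init__(self) -> None:
        if self.amount_cents < 0:
            raise ValueError("amount must not be negative")
        if len(self.currency) != 3:
            raise ValueError("currency must be a 3-letter code")

    def add(self, other: "Money") -> "Money":
        if other.currency != self.currency:
            raise ValueError("cannot add different currencies")
        return Money(self.amount_cents + other.amount_cents, self.currency)
```

Validation now lives in one place, and mixing currencies fails loudly instead of silently producing a wrong total.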

The Human Element of Design Reviews 👥

Technical correctness is only half the battle. The social dynamics of a review impact its success.

- Psychological Safety: Ensure reviewers feel safe raising concerns, and that criticism targets the design rather than the designer.

- Constructive Feedback: Focus on the code and design, not the person. Use “we” language where possible.

- Time Management: Keep the meeting on track. If a discussion goes off-topic, park it for later.

- Follow-up: Assign action items with owners and deadlines. A review without follow-through is wasted time.

Metrics for Continuous Improvement 📈

To ensure the review process itself is effective, track metrics over time.

- Escaped Design Defects: How many production bugs trace back to flaws that the design review could have caught?

- Review Cycle Time: How long does it take to complete a review from start to finish?

- Rework Rate: How often does the design need to be revisited after implementation starts?

- Team Satisfaction: Do developers feel the reviews add value to their work?

Final Thoughts on Quality Assurance 💡

Implementing a rigorous object-oriented design review process requires commitment. It is not about finding fault, but about building confidence in the system. By systematically applying the checklist above, teams can ensure that their software architecture remains solid as the requirements evolve.

Remember that design is iterative. A perfect design does not exist at the start. The goal is to make informed decisions that reduce risk and increase maintainability. Regular reviews create a culture of quality where technical debt is managed proactively rather than reactively. This approach leads to systems that stand the test of time and change.

Start with these principles today. Apply the checklist to your next project. Observe the improvements in code stability and team velocity. The path to robust software is paved with careful, deliberate design reviews.