Evaluating the quality of an object-oriented design is a critical skill for any software architect or developer. A well-structured design ensures that software remains maintainable, scalable, and adaptable to changing requirements over time. In the field of Object-Oriented Analysis and Design (OOAD), the focus shifts from simply making code work to making code work well. This guide provides a comprehensive framework for assessing design quality without relying on hype or shortcuts.

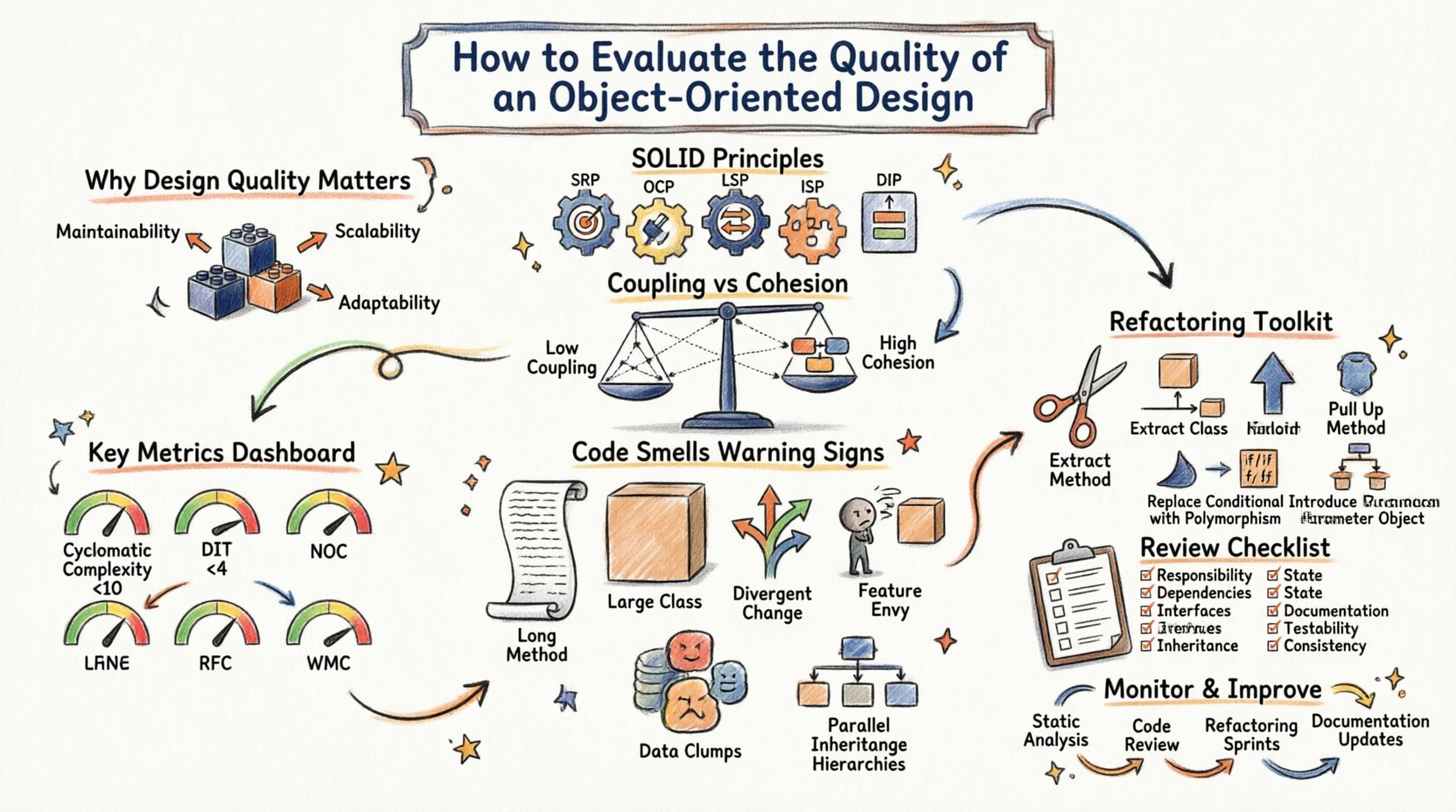

Why Design Quality Matters 🏗️

Code is read far more often than it is written. When an object-oriented system is poorly designed, developers spend excessive time debugging, refactoring, or avoiding certain features due to structural complexity. High-quality design reduces the cognitive load on the team. It creates a system where changes in one area have minimal, predictable ripple effects in others.

Evaluation is not just about finding bugs; it is about predicting future effort. A robust design anticipates change. It separates concerns so that business logic can evolve without breaking the underlying infrastructure. When you assess a design, you are essentially auditing the long-term health of the software product.

The Core Pillars of Object-Oriented Design 🧱

To evaluate quality effectively, you must understand the foundational principles that guide good architecture. These principles act as the criteria against which you measure your system. While there are many patterns, a few core concepts stand out as non-negotiable for high-quality design.

1. The SOLID Principles ⚙️

The SOLID acronym represents five principles that promote maintainability and flexibility. Each letter stands for a specific guideline that, when followed, leads to better class structures.

- Single Responsibility Principle (SRP): A class should have one, and only one, reason to change. If a class handles both database operations and user interface logic, it violates this principle. High cohesion within a class is a key indicator of SRP compliance.

- Open/Closed Principle (OCP): Software entities should be open for extension but closed for modification. You should be able to add new functionality without altering existing source code. This is often achieved through interfaces and polymorphism.

- Liskov Substitution Principle (LSP): Objects of a superclass should be replaceable with objects of its subclasses without breaking the application. If a subclass behaves unexpectedly when used in place of the parent, the hierarchy is flawed.

- Interface Segregation Principle (ISP): Clients should not be forced to depend on methods they do not use. Large, monolithic interfaces should be split into smaller, specific ones. This reduces coupling between components.

- Dependency Inversion Principle (DIP): High-level modules should not depend on low-level modules. Both should depend on abstractions. This decouples the system, allowing for easier testing and swapping of implementations.
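As a rough illustration of the Dependency Inversion and Open/Closed principles described above, the sketch below has a high-level service depend only on an abstraction. All names (`Notifier`, `EmailNotifier`, `SmsNotifier`, `OrderService`) are hypothetical and chosen purely for the example.

```python
from abc import ABC, abstractmethod


class Notifier(ABC):
    """Abstraction that both high-level and low-level code depend on."""

    @abstractmethod
    def send(self, recipient: str, message: str) -> str:
        ...


class EmailNotifier(Notifier):
    def send(self, recipient: str, message: str) -> str:
        return f"email to {recipient}: {message}"


class SmsNotifier(Notifier):
    # Can be added later without editing OrderService (Open/Closed).
    def send(self, recipient: str, message: str) -> str:
        return f"sms to {recipient}: {message}"


class OrderService:
    """High-level policy; it knows only the Notifier abstraction."""

    def __init__(self, notifier: Notifier) -> None:
        self._notifier = notifier  # injected, not constructed here

    def confirm(self, customer: str, order_id: int) -> str:
        return self._notifier.send(customer, f"order {order_id} confirmed")
```

Because `OrderService` receives its collaborator through the constructor, a test can pass in a fake `Notifier`, and a new delivery channel is a new subclass rather than a change to existing code.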

2. Coupling and Cohesion 🔗

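These two properties are among the most direct indicators of design health. They are related but distinct: a design built from highly cohesive modules usually needs fewer cross-module dependencies, though improving one does not automatically improve the other.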

- Coupling: The degree of interdependence between software modules. Low coupling is desirable. It means changes in one module do not require changes in another. High coupling creates a web of dependencies that makes refactoring risky.

- Cohesion: The degree to which elements inside a module belong together. High cohesion means a class or module performs a well-defined, single task. Low cohesion implies a class is doing too many unrelated things, often a sign of the “God Class” anti-pattern.
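One way to picture high cohesion with low coupling is the sketch below, where pricing and presentation live in separate classes that communicate only through plain values. The invoice domain and every class name here are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    description: str
    unit_price: float
    quantity: int


class InvoiceCalculator:
    """Cohesive: every method concerns pricing arithmetic."""

    def __init__(self, tax_rate: float) -> None:
        self._tax_rate = tax_rate

    def subtotal(self, items: list[LineItem]) -> float:
        return sum(i.unit_price * i.quantity for i in items)

    def total(self, items: list[LineItem]) -> float:
        return round(self.subtotal(items) * (1 + self._tax_rate), 2)


class InvoiceFormatter:
    """Cohesive: every method concerns presentation.

    It is coupled to the calculator only through the float it is handed.
    """

    def render(self, items: list[LineItem], total: float) -> str:
        lines = [f"{i.quantity} x {i.description}" for i in items]
        lines.append(f"TOTAL {total:.2f}")
        return "\n".join(lines)
```

A change to tax rules touches only `InvoiceCalculator`, and a change to layout touches only `InvoiceFormatter`. A single class doing both jobs would have two unrelated reasons to change.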

Key Metrics for Quantitative Analysis 📊

While principles provide qualitative guidance, metrics offer quantitative data. Static analysis tools often calculate these values to highlight potential problem areas. Below are the most relevant metrics for object-oriented evaluation.

| Metric | What it Measures | Desired State | Implication |
|---|---|---|---|
| Cyclomatic Complexity | Number of linearly independent paths through a piece of code | Low (e.g., < 10 per method) | High complexity increases testing effort and bug risk. |
| Depth of Inheritance Tree (DIT) | Length of the longest path from a class up to the root of its hierarchy | Low (e.g., < 4) | Deep trees make inherited behavior difficult to understand. |
| Number of Children (NOC) | Number of immediate subclasses of a class | Variable | Many children widen the impact of any change to the parent; very few may indicate a missed abstraction. |
| Response for a Class (RFC) | Number of methods that can be executed in response to a message sent to an object of the class (its own methods plus the methods they call) | Low to Moderate | High RFC suggests the class is doing too much and is harder to test. |
| Weighted Methods per Class (WMC) | Sum of the complexities of all methods in a class | Low | Indicates how difficult the class is to understand and test. |

When reviewing these metrics, context is king. A high WMC might be acceptable for a complex domain model, whereas a low WMC is expected for a simple data container. The goal is to identify outliers that deviate significantly from the norm within the project.
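To make a few of these metrics concrete, here is a minimal sketch that computes DIT and NOC through Python introspection and approximates cyclomatic complexity by counting branch nodes with the standard `ast` module. The counting rule is a simplification (it ignores comprehensions and `match` statements, for example), `object` is treated as depth 0 by assumption, and the sample classes are hypothetical.

```python
import ast


def depth_of_inheritance(cls: type) -> int:
    """Longest path from cls up to `object`, which counts as depth 0."""
    if cls is object:
        return 0
    return 1 + max(depth_of_inheritance(base) for base in cls.__bases__)


def number_of_children(cls: type) -> int:
    """Count of immediate subclasses currently loaded."""
    return len(cls.__subclasses__())


_BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp)


def approx_cyclomatic_complexity(source: str) -> int:
    """1 + number of decision points found in the source (approximation)."""
    complexity = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _BRANCH_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` contributes two extra decision points.
            complexity += len(node.values) - 1
    return complexity


# Hypothetical hierarchy used only to exercise the functions.
class Shape: ...
class Polygon(Shape): ...
class Triangle(Polygon): ...
class Circle(Shape): ...


SAMPLE = """
def classify(n):
    if n < 0 and n % 2:
        return "negative odd"
    for d in range(2, n):
        if n % d == 0:
            return "composite"
    return "other"
"""
```

Dedicated static analysis tools apply more complete rules than this, so treat the numbers from a sketch like this as a way to spot outliers, not as authoritative scores.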

Identifying Code Smells 🚨

Code smells are surface-level indicators of deeper problems in the design. They are not bugs, but they suggest that the design is starting to degrade. Recognizing these patterns early allows for proactive refactoring.

- Long Method: A function that is too large to understand easily. It should be broken down into smaller, named methods.

- Large Class: A class with too many responsibilities. It is often a sign that SRP has been violated.

- Divergent Change: A class that changes for many different reasons. This indicates a lack of cohesion.

- Feature Envy: A method that uses more data from another class than from its own. The method should likely belong to the class it is obsessed with.

- Data Clumps: Groups of data that always appear together. These should be bundled into their own object or structure.

- Parallel Inheritance Hierarchies: If you add a subclass to one hierarchy, you must add one to another. This creates a tight coupling between class hierarchies.
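Two of the smells above, Data Clumps and Feature Envy, can be sketched in a few lines. The example assumes a hypothetical `Customer` and `Address` pair: the address fields that always travel together are bundled into one object, and the formatting logic moves next to the data it reads.

```python
from dataclasses import dataclass


@dataclass
class Address:
    # Street, city, and postal code always appear together (a data clump),
    # so they are grouped into their own type.
    street: str
    city: str
    postal_code: str

    def mailing_label(self) -> str:
        # Behaviour lives next to the data it reads.
        return f"{self.street}\n{self.postal_code} {self.city}"


@dataclass
class Customer:
    name: str
    address: Address

    def envious_label(self) -> str:
        # Smell: reads three Address fields and only one of its own.
        a = self.address
        return f"{self.name}\n{a.street}\n{a.postal_code} {a.city}"

    def label(self) -> str:
        # After moving the formatting to Address, Customer only delegates.
        return f"{self.name}\n{self.address.mailing_label()}"
```

Both methods produce the same text, but only `label` survives a change to the address format without editing `Customer`.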

Refactoring Strategies for Improvement 🔧

Once an evaluation identifies issues, the next step is improvement. Refactoring is the process of changing a software system’s internal structure without changing its external behavior. It is the primary tool for maintaining design quality over time.

Common Refactoring Techniques

- Extract Method: Take a chunk of code within a method and turn it into a new method. This reduces duplication and improves readability.

- Extract Class: Move some fields and methods to a new class. This helps separate concerns and reduce class size.

- Pull Up Method: Move a method that is duplicated across subclasses into their shared superclass. This removes duplication and promotes code reuse.

- Replace Conditional Logic with Polymorphism: Instead of using `if/else` statements to handle different types, create specific methods in subclasses. This supports the Open/Closed Principle.

- Introduce Parameter Object: Group parameters that often appear together into a single object. This simplifies method signatures.
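As a sketch of replacing conditional logic with polymorphism, the example below shows a type-switching function next to an equivalent class hierarchy. The shape domain and all names are hypothetical.

```python
from abc import ABC, abstractmethod
import math


def area_with_conditionals(kind: str, size: float) -> float:
    # Before: every new shape means editing this function.
    if kind == "circle":
        return math.pi * size ** 2
    elif kind == "square":
        return size ** 2
    raise ValueError(f"unknown shape: {kind}")


class Shape(ABC):
    @abstractmethod
    def area(self) -> float: ...


class Circle(Shape):
    def __init__(self, radius: float) -> None:
        self.radius = radius

    def area(self) -> float:
        return math.pi * self.radius ** 2


class Square(Shape):
    def __init__(self, side: float) -> None:
        self.side = side

    def area(self) -> float:
        return self.side ** 2


def total_area(shapes: list[Shape]) -> float:
    # After: no type checks; a new Shape subclass needs no change here.
    return sum(shape.area() for shape in shapes)
```

The refactoring pays off when the same type check is repeated in several places. For a single, stable conditional, the original function may well be the simpler choice.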

Trade-offs and Contextual Decisions ⚖️

Design is rarely black and white. There are often trade-offs between performance, readability, and complexity. A design that is perfectly decoupled might introduce overhead that affects performance. A design that is highly optimized might be difficult to understand.

- Performance vs. Maintainability: Sometimes, strict adherence to design principles can add layers of indirection. In performance-critical sections, it may be acceptable to relax these rules for direct execution.

- Complexity vs. Simplicity: Over-simplifying a domain model can hide important business rules. Conversely, over-engineering a simple script adds unnecessary maintenance burden.

- Time vs. Quality: Under tight deadlines, teams might introduce technical debt. The evaluation process should track this debt and schedule time to pay it back before it compounds.

A Practical Review Checklist ✅

When conducting a design review, use the following checklist to ensure all aspects of quality are covered. This helps standardize the evaluation process across the team.

- Responsibility: Does every class have a clear, single purpose?

- Dependencies: Are dependencies injected rather than created locally? Are they minimized?

- Interfaces: Are interfaces specific to client needs?

- Inheritance: Does each subclass model a genuine is-a relationship and remain substitutable for its parent, rather than inheriting only to reuse implementation?

- State: Is state encapsulated? Is it mutable only where necessary?

- Documentation: Is the design intent clear through comments or documentation?

- Testability: Can the components be tested in isolation?

- Consistency: Does the naming and structure follow the established conventions of the project?

The Human Element of Design 👥

Automated tools and metrics are helpful, but they cannot capture everything. The human element plays a significant role in design quality. A design that is technically perfect might fail if the team cannot understand it.

- Team Knowledge: A design should leverage the team’s existing skills. Introducing complex patterns unnecessarily can slow down onboarding.

- Communication: Good design facilitates communication. Clear boundaries between modules allow different teams to work in parallel without stepping on each other’s toes.

- Feedback Loops: Regular code reviews are essential. They provide a forum to discuss design decisions and share knowledge.

Monitoring Design Health Over Time 📈

Evaluation is not a one-time event. Software evolves, and design quality can degrade. Continuous monitoring ensures that the system remains healthy.

- Static Analysis Integration: Integrate analysis tools into the build pipeline to catch violations early.

- Code Review Policies: Require design discussions for significant changes.

- Refactoring Sprints: Dedicate specific time to address technical debt and improve structure.

- Documentation Updates: Ensure architecture diagrams are updated as the system changes.
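A build-pipeline check along these lines could be as small as the sketch below, which flags functions whose branch count exceeds a limit and returns an exit code for the build step. The scoring rule, the default limit of 10, and the function names are all assumptions for illustration; an established static analysis tool would normally fill this role.

```python
import ast

_BRANCHES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp)


def branch_score(func: ast.AST) -> int:
    """1 + count of branching nodes inside one function (a rough proxy)."""
    return 1 + sum(isinstance(n, _BRANCHES) for n in ast.walk(func))


def find_violations(source: str, limit: int) -> list[tuple[str, int]]:
    """Return (function name, score) for every function scoring above limit."""
    tree = ast.parse(source)
    return [
        (node.name, branch_score(node))
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and branch_score(node) > limit
    ]


def gate(source: str, limit: int = 10) -> int:
    """Exit code for a CI step: 0 when clean, 1 when any function is over limit."""
    violations = find_violations(source, limit)
    for name, score in violations:
        print(f"{name}: score {score} exceeds limit {limit}")
    return 1 if violations else 0
```

In a pipeline, a small wrapper script would read each changed file, pass its text to `gate`, and exit with the returned code so that the build fails when a new outlier appears.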

Conclusion on Evaluation Practices 🎯

Evaluating object-oriented design is an ongoing discipline. It requires a balance of theoretical knowledge, practical metrics, and human judgment. By focusing on principles like SOLID, monitoring coupling and cohesion, and watching for code smells, teams can build systems that stand the test of time. The goal is not perfection, but continuous improvement and resilience against change.

Remember that the best design is the one that solves the problem effectively while remaining understandable by the people who must maintain it. Prioritize clarity and simplicity, and let the metrics support those goals rather than dictate them.