The landscape of digital interaction has shifted dramatically. We are no longer simply building interfaces; we are constructing environments that shape behavior, influence decisions, and handle sensitive personal information. As designers and product creators, we carry a responsibility with significant ethical implications. The question is no longer just “Can we build this feature?” but “Should we build this feature, and how does it affect the human experience?”

Ethical UX design goes beyond compliance. It is about respect for the user, transparency in data practices, and a commitment to inclusivity. Ignoring these factors leads to erosion of trust, potential legal repercussions, and social harm. This guide explores the critical pillars of ethical design, focusing on privacy and bias, and provides actionable strategies for integrating these values into your workflow.

🔒 Privacy in UX: More Than Just Compliance 📋

Privacy is often treated as a legal checkbox, a hurdle to clear before launch. However, in the context of user experience, privacy is a core component of trust. Users are increasingly aware of how their data is collected and utilized. When a digital product feels intrusive, the relationship between the brand and the user fractures.

The Psychology of Data Collection

Every data point requested from a user represents a micro-decision. Why does this app need my location? Why is the search history saved? When designers bury these questions behind vague terms or pre-ticked boxes, they manipulate the user into giving consent they might not otherwise grant. Ethical design demands clarity.

- Granularity: Avoid asking for all permissions at once. Request location access only when a feature requiring it is active.

- Justification: Explain why data is needed before asking for it. “We need your email to send your receipt” is better than “Enter your email for account creation.”

- Default Settings: The default should always be the most privacy-conscious option. Opt-out mechanisms should be visible, not hidden.
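Here is a minimal Python sketch of the three principles above. `ConsentSettings`, `JUSTIFICATIONS`, and `request_permission` are hypothetical names and not part of any real platform's permission API. `ask_user` stands in for whatever prompt the interface actually shows. Every optional permission starts switched off, and nothing is requested until the feature that needs it is active. The user always sees the reason before deciding.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class ConsentSettings:
    """Hypothetical per-user consent record: every optional permission defaults to off."""
    location: bool = False
    analytics: bool = False
    marketing_email: bool = False

# Hypothetical user-facing reasons, shown before the permission prompt.
JUSTIFICATIONS = {
    "location": "We use your location to show stores near you.",
    "marketing_email": "We'll email occasional offers; you can unsubscribe at any time.",
}

def request_permission(settings: ConsentSettings, permission: str,
                       feature_active: bool, ask_user: Callable[[str], bool]) -> bool:
    """Request one permission just in time, with a stated reason; return whether it is granted."""
    if getattr(settings, permission):
        return True  # already granted, so don't prompt again
    if not feature_active:
        return False  # granularity: never ask up front for a feature not in use
    reason = JUSTIFICATIONS.get(permission)
    if reason is None:
        # No justification written yet: refuse to prompt rather than ask without explaining.
        raise ValueError(f"no justification defined for {permission!r}")
    granted = ask_user(reason)  # ask_user is a stand-in for the real UI prompt
    setattr(settings, permission, granted)
    return granted
```

In this sketch, refusing to prompt when no justification exists is a deliberate choice. It forces the team to state why data is needed before the interface can ask for it.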

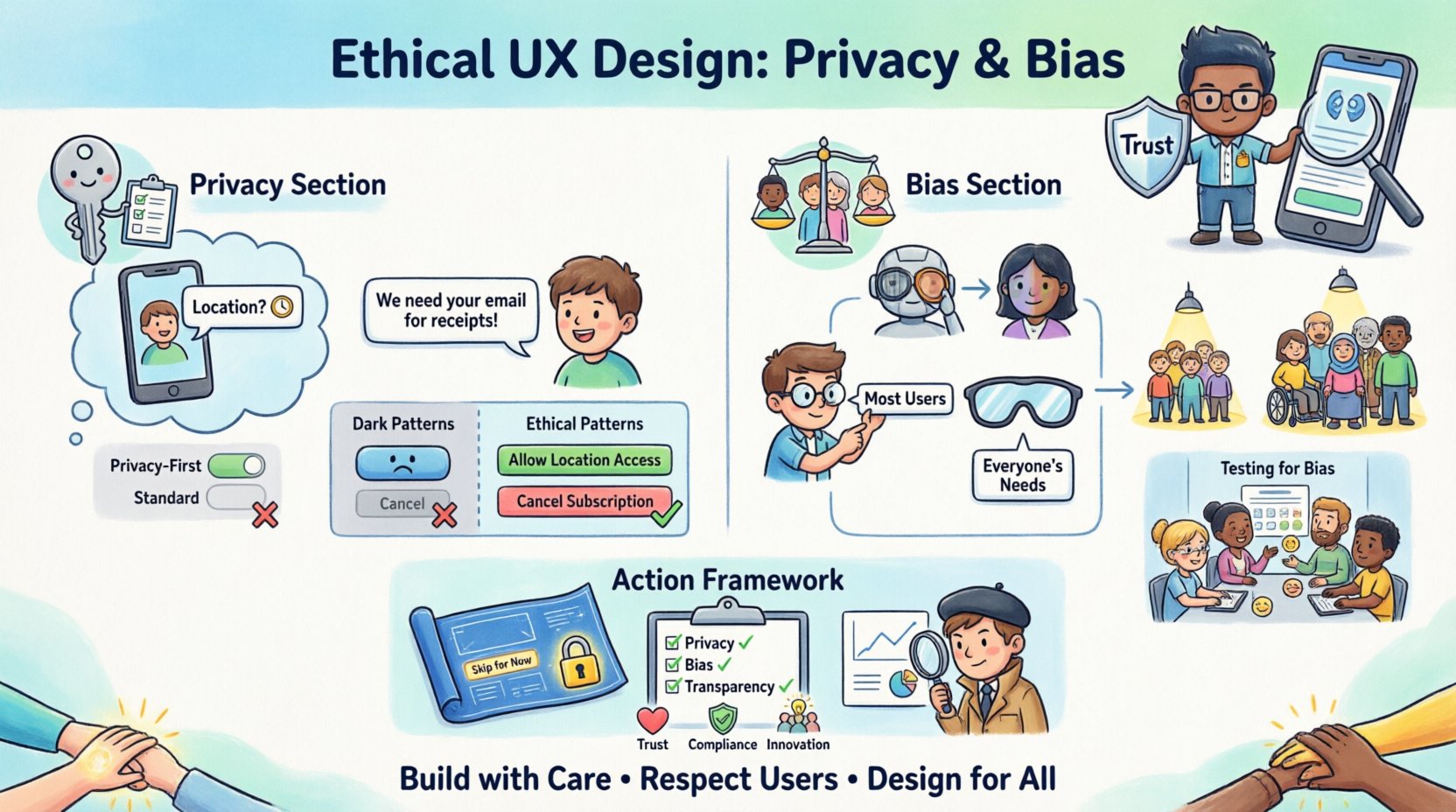

Dark Patterns vs. Ethical Patterns

Some design choices are intended to trick users into actions they did not intend. These are known as dark patterns. While they may drive short-term metrics, they damage long-term brand equity. Ethical design rejects these tactics in favor of transparency.

| Dark Pattern | Ethical Alternative |
|---|---|
| Confirm Shaming: Using guilt or shame to force a choice. | Neutral Language: Presenting options without emotional manipulation. |
| Roach Motel: Making it easy to sign up but hard to cancel. | Equal Access: Ensuring cancellation is as simple as sign-up. |
| Hidden Costs: Adding fees late in the checkout process. | Full Disclosure: Showing all costs upfront before commitment. |
| Forced Continuity: Silently converting a free trial into a paid subscription without warning. | Clear Termination: Notifying users before a trial converts and providing a straightforward path to stop service. |

Data Minimization and Purpose Limitation

Collecting data “just in case” is a fundamental violation of privacy ethics. Ethical design adheres to the principle of data minimization. This means collecting only what is strictly necessary for the specific function being performed.

Furthermore, purpose limitation dictates that data collected for one reason cannot be repurposed for another without new consent. For example, using browsing data collected for site optimization to target ads requires explicit user permission. Designers must build interfaces that reflect these boundaries, ensuring users understand the lifecycle of their information.
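Both principles can be enforced in code as well as in the interface. The Python sketch below assumes a hypothetical `REQUIRED_FIELDS` table and a hypothetical `PurposeBoundRecord` type. `minimize` discards any submitted field that the stated purpose does not need. Each record also remembers the purpose it was collected for. Reusing the data for something else, such as the ad-targeting example above, fails until the user grants that new purpose.

```python
from dataclasses import dataclass, field

# Hypothetical map from purpose to the only fields that purpose strictly needs.
REQUIRED_FIELDS = {
    "send_receipt": {"email"},
    "site_optimization": {"page_views"},
}

def minimize(submitted: dict, purpose: str) -> dict:
    """Data minimization: keep only the fields the stated purpose needs."""
    allowed = REQUIRED_FIELDS[purpose]
    return {key: value for key, value in submitted.items() if key in allowed}

@dataclass
class PurposeBoundRecord:
    """Hypothetical record that remembers why its data was collected."""
    data: dict
    collected_for: str
    extra_consents: set = field(default_factory=set)

    def grant(self, purpose: str) -> None:
        """Record that the user explicitly agreed to a new purpose."""
        self.extra_consents.add(purpose)

    def use_for(self, purpose: str) -> dict:
        """Purpose limitation: release data only for the original or an explicitly granted purpose."""
        if purpose != self.collected_for and purpose not in self.extra_consents:
            raise PermissionError(f"new consent needed to use this data for {purpose!r}")
        return self.data
```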

⚖️ Bias in UX: Identifying and Removing Exclusion ⚖️

Bias in digital products is rarely malicious, but it is pervasive. It stems from the homogeneity of design teams, the limitations of training data, and the assumptions made about user behavior. When a product assumes a specific user profile, it alienates everyone else. This exclusion creates barriers to access and reinforces societal inequalities.

Types of Bias in Design

- Algorithmic Bias: AI and machine learning models learn from historical data. If that data contains prejudices, the output will reflect them. For instance, image recognition software trained on unrepresentative data may struggle to identify people with darker skin tones.

- Confirmation Bias: Designers may interpret user feedback in a way that confirms their existing beliefs, ignoring data that contradicts their assumptions.

- Availability Heuristic: Relying on the most readily available examples of users (often those closest to the design team) rather than a diverse, representative sample.

Representation in Visual and Interaction Design

Visual representation matters. If the icons, images, and avatars used in an interface only depict a narrow demographic, users from other backgrounds may feel unwelcome or invisible. This extends beyond imagery to the language used in copy and the color palettes chosen.

Color contrast is not just an accessibility issue; it is an equity issue. Ensuring text is readable for users with low vision or color blindness allows more people to participate fully in the digital experience. Similarly, input fields that assume Western naming conventions or address formats can frustrate users from different cultural backgrounds.
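Contrast can be checked numerically. WCAG 2.x first linearizes each sRGB channel, then computes relative luminance as L = 0.2126 R + 0.7152 G + 0.0722 B. The contrast ratio is (L_lighter + 0.05) / (L_darker + 0.05). Level AA requires at least 4.5:1 for normal text and 3:1 for large text. The Python sketch below implements that formula for 6-digit hex colors only, and the function names are illustrative.

```python
def _channel_to_linear(value: int) -> float:
    """Linearize one 8-bit sRGB channel using the WCAG 2.x relative-luminance formula."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color written as '#RRGGBB' (other notations are not handled)."""
    h = hex_color.lstrip("#")
    if len(h) != 6:
        raise ValueError("expected a 6-digit hex color such as '#767676'")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (0.2126 * _channel_to_linear(r)
            + 0.7152 * _channel_to_linear(g)
            + 0.0722 * _channel_to_linear(b))

def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio, from 1:1 (identical) to 21:1 (black on white); argument order doesn't matter."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Level AA: 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)
```

For example, `passes_aa("#767676", "#FFFFFF")` returns `True` (about 4.54:1). By contrast, `#777777` on white falls just short at about 4.48:1, which shows how narrow the margin can be.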

Testing for Bias

Usability testing is the primary tool for identifying bias, but it requires intentionality. Standard testing often involves recruiting users who fit the “typical” profile. To uncover bias, you must actively seek out diverse participants.

- Diverse Recruitment: Ensure test groups vary in age, ability, ethnicity, gender, and technical literacy.

- Scenario Testing: Ask users to complete tasks that might expose cultural or contextual friction points.

- Feedback Loops: Create channels for users to report bias or exclusion they experience within the product.

🛠️ Implementing Ethical Frameworks in Your Workflow

Integrating ethics into the design process requires structure. Relying on individual conscience is not enough. Teams need frameworks and checklists to ensure ethical considerations are addressed at every stage.

The Ethical Design Checklist

Before any feature ships, run it through a standardized review. This checklist ensures that privacy and bias are not afterthoughts.

- Privacy:

  - Is data collection limited to what the feature strictly needs?

  - Are consent mechanisms clear and unambiguous?

  - Can users easily access and delete their data?

- Bias:

  - Have we tested with a diverse range of users?

  - Do the images and icons reflect a diverse range of people?

  - Is the language neutral and free of stereotypes?

- Transparency:

  - Are the terms of service understandable?

  - Is the purpose of every feature explained?

  - Are errors and limitations communicated honestly?

Privacy by Design

This concept involves embedding privacy into the architecture of the system rather than adding it as an afterthought. In the design phase, this means planning for data deletion, encryption, and access controls. It means designing interfaces that make privacy the default path.
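One way to plan for deletion at design time is to give every category of data a retention period before anything is stored. The Python sketch below assumes a hypothetical `RETENTION` table and `StoredItem` record. `purge_expired` keeps only items that are still inside their category's window. Any category without an agreed retention period is treated as something not to keep at all.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical retention periods, agreed during design rather than after launch.
RETENTION = {
    "support_ticket": timedelta(days=365),
    "search_history": timedelta(days=30),
}

@dataclass
class StoredItem:
    user_id: str
    category: str
    created_at: datetime

def purge_expired(items: list, now: datetime) -> list:
    """Return only the items still within their category's retention period.

    Items whose category has no defined retention period are dropped as well:
    if nobody decided how long to keep it, the privacy-conscious default is not to keep it.
    """
    kept = []
    for item in items:
        limit = RETENTION.get(item.category)
        if limit is not None and now - item.created_at <= limit:
            kept.append(item)
    return kept
```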

Bias Audits

Regular audits of the product can reveal hidden biases. This involves reviewing code, data sets, and user interactions for patterns of exclusion. If a feature is used significantly less by a specific demographic, it warrants investigation. Is the feature broken for them, or are they choosing not to use it due to design friction?
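The usage comparison described above can be automated as a first-pass signal. The Python sketch below computes each group's adoption rate from hypothetical `(group, used_feature)` pairs. It then flags any group whose rate falls below a fraction of the best-served group's rate. The 0.8 default echoes the "four-fifths" rule of thumb from US employment-selection guidelines, but here it is only an assumption to tune. A flag means "investigate", not "biased". Small groups also need enough users for their rates to mean anything.

```python
from collections import defaultdict

def usage_rates(events):
    """events: iterable of (group, used_feature) pairs, one per user; returns group -> share who used it."""
    totals = defaultdict(int)
    users = defaultdict(int)
    for group, used in events:
        totals[group] += 1
        if used:
            users[group] += 1
    return {group: users[group] / totals[group] for group in totals}

def flag_underserved(rates, threshold=0.8):
    """Return groups whose usage rate is below `threshold` times the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        return []  # nobody uses the feature; that's a different problem from uneven usage
    return sorted(group for group, rate in rates.items() if rate < threshold * best)
```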

📈 The Business Case for Ethical UX

Some stakeholders view ethics as a cost center, a barrier to growth or speed. However, ethical design is a strategic asset. In an era where data breaches and algorithmic scandals make headlines, trust is a differentiator.

Trust and Retention

Users are more likely to return to a platform they trust. If a user feels their data is safe and the product respects their boundaries, they are more likely to engage deeply. Conversely, a single instance of unethical behavior can lead to mass churn and reputational damage that takes years to repair.

Regulatory Compliance

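Laws regarding data protection and digital accessibility are tightening globally. Designing with ethics in mind supports compliance with data protection regulations such as the GDPR and CCPA. It also supports conformance with accessibility standards such as WCAG, which many accessibility laws and policies reference. This reduces legal risk and avoids costly redesigns later in the development cycle.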

Innovation through Inclusivity

Designing for the margins often leads to better solutions for the center. When you design for users with disabilities, you often improve the experience for everyone. When you account for diverse cultural contexts, you open up new markets. Ethical design expands the potential audience rather than limiting it.

🔮 Future Trends in Ethical Design

The conversation around ethics is evolving rapidly. As technology advances, new challenges emerge that require proactive design thinking.

Artificial Intelligence and Automation

As AI becomes more integrated into user interfaces, the risk of opaque decision-making increases. Users need to understand when they are interacting with a machine and how that machine makes decisions. Explainable AI (XAI) is likely to become a standard requirement in UX design.
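For simple models, an explanation can be exact. In a linear scoring model, the score is a bias term plus the sum of weight × feature value. Each product is therefore precisely that feature's contribution to the score. The Python sketch below ranks those contributions and phrases the largest ones in plain language. The model, weights, and feature names are hypothetical. Non-linear models need dedicated attribution methods rather than this shortcut.

```python
def explain_linear_score(weights, bias, features, top_n=3):
    """Score a linear model and return its largest per-feature contributions.

    For score = bias + sum(weights[f] * features[f]), each product is exactly
    that feature's share of the score, so the explanation is faithful to the model.
    """
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked[:top_n]

def describe(ranked):
    """Turn non-zero contributions into short, user-facing sentences."""
    return [f"{name.replace('_', ' ')} {'raised' if c > 0 else 'lowered'} the score by {abs(c):.1f}"
            for name, c in ranked if c != 0]
```

With hypothetical weights `{"watched_similar": 2.0, "days_since_visit": -0.5}`, a user could be told "watched similar raised the score by 6.0" rather than seeing an unexplained recommendation.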

Emotional and Biometric Data

Wearables and cameras allow products to collect biometric and emotional data. This is highly sensitive information. Designers will need to create new paradigms for consent and storage when dealing with this level of personal insight. The line between tool and monitor must remain clear.

Regulatory Evolution

Legislation will continue to catch up with technology. Future designs may need built-in mechanisms for the “right to be forgotten” that are more robust than simple delete buttons. Data portability, which lets users easily move their data between platforms, is also likely to become a standard expectation.
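Erasure and portability both depend on knowing every place a user's data lives. The Python sketch below models storage as a hypothetical dict of named in-memory stores, each a list of records with a `user_id` key. `export_user_data` bundles one user's records into machine-readable JSON. `erase_user` removes those records from every store and reports counts for an audit trail. A real system would also have to reach backups, logs, and third-party processors, which this sketch does not attempt.

```python
import json

def export_user_data(user_id, stores):
    """Portability: collect one user's records from every store into a single JSON document."""
    bundle = {name: [record for record in records if record.get("user_id") == user_id]
              for name, records in stores.items()}
    return json.dumps({"user_id": user_id, "data": bundle}, indent=2, sort_keys=True)

def erase_user(user_id, stores):
    """Erasure: remove the user's records from every store (not just the profile) and count them."""
    removed = {}
    for name, records in stores.items():
        before = len(records)
        records[:] = [record for record in records if record.get("user_id") != user_id]
        removed[name] = before - len(records)
    return removed
```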

🤝 Building a Culture of Responsibility

Technical solutions alone cannot fix ethical issues. A culture of responsibility must exist within the organization. This means empowering designers and product managers to say no to features that violate ethical standards. It requires leadership that values long-term trust over short-term gains.

Education and Training

Teams need to stay informed about ethical trends. Regular workshops on bias, privacy laws, and inclusive design ensure that everyone understands their role in maintaining ethical standards. Knowledge is the first line of defense against unintentional harm.

Cross-Functional Collaboration

Ethics cannot be siloed within the design team. Legal, engineering, and marketing must all be aligned on these principles. Engineering needs to understand the privacy implications of their architecture. Marketing needs to understand the boundaries of their messaging. Design needs to ensure the interface reflects these agreements.

Accountability Mechanisms

Who is responsible when something goes wrong? Clear lines of accountability ensure that ethical lapses are addressed quickly. Establishing an ethics board or a designated privacy officer within the organization can provide oversight and guidance.

🚀 Moving Forward with Integrity

The digital products we create have real-world consequences. They shape how people communicate, work, and interact with the world. As designers, we hold the keys to these experiences. With that power comes the obligation to ensure those experiences are safe, fair, and respectful.

Privacy and bias are not static targets. They require constant vigilance and adaptation. By prioritizing these considerations, we build products that not only function well but also do good. We create digital spaces where users feel seen, heard, and respected.

This is the standard we must strive for. It is not about perfection, but about progress. Every decision made with integrity adds to a healthier digital ecosystem. Let us move forward with the understanding that our work impacts lives, and choose to build with care.